
Keel: The Leash for Agentic SDLC Management

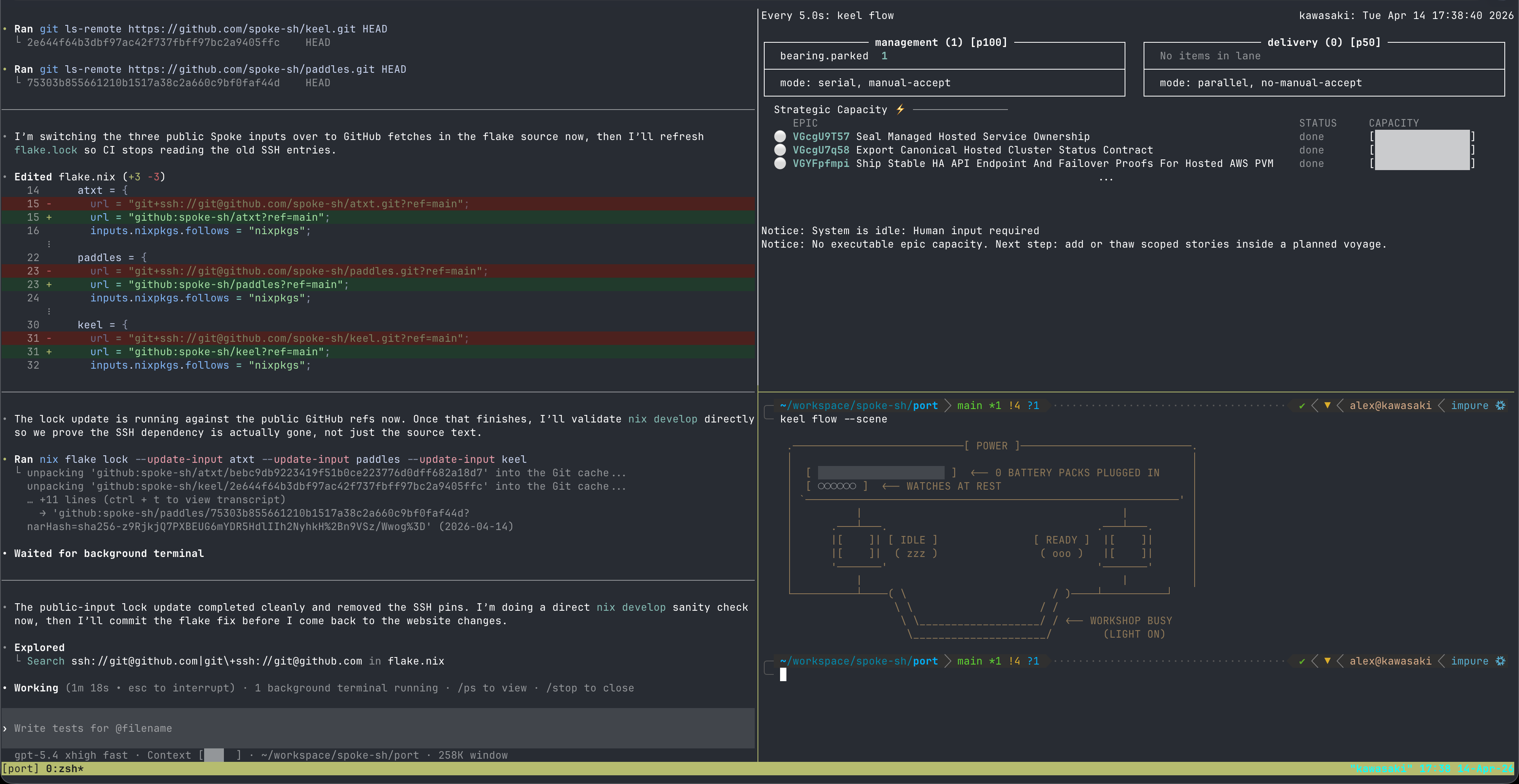

The model and the harness cover two-thirds of agentic engineering. The missing layer is the host: a CLI state machine that enforces shared physics for humans and agents alike. High-fidelity CLI output (flow dashboards, knowledge graphs, structured status) is not decoration. It's how agents read board state without hallucinating the parts they cannot see.

2026-04-03

Most conversations about agentic engineering focus on two things: the model and the harness. The model reasons, writes code, and makes decisions. The harness orchestrates tool calls, manages context, and coordinates multi-step workflows. Together, they're powerful. Together, they're still missing something.

The missing layer is the host. Not the model. Not the harness. The persistent, rule-enforcing system that governs what work is valid, what evidence is required, and what constitutes done, regardless of who or what is doing the work.

Without a host that enforces shared physics, you don't have a governed system. You have a capable but unaccountable agent. Keel is that host. It's a CLI state machine that both humans and agents must operate through. The leash isn't a constraint on what the model can reason about; it's a guarantee of what the system will enforce.

The model and harness cover two-thirds of the picture

A language model brings extraordinary capability: it can read a codebase, understand a spec, write a solution, explain a tradeoff, and navigate ambiguity. A harness brings coordination: it structures tool calls, manages the context window, routes subtasks, and keeps the agent loop running.

But neither answers the foundational questions that govern a software delivery team:

- What rules constrain the work? Not prompt-level suggestions but structural invariants that persist across sessions and actors.

- What constitutes done? Not the model's assertion that it's complete but verifiable evidence the system can check.

- Where is human judgment required? Not inferred from context but marked by explicit gates that halt execution until a human decides.

- What's the shared state? Not reconstructed from a context window but persisted as git-auditable truth that survives session boundaries.

Without a host layer that answers these questions structurally, every session starts with renegotiation. The model re-reads the codebase. The harness reconstructs intent from conversation history. Humans re-verify things they already approved. The agent loop restarts from scratch.

This isn't a capability problem. It's a governance problem.

The design pattern: CLI state machine as host

The pattern Keel implements is deceptively simple: every actor, human or agent, interacts with the board through the same CLI state machine. The machine defines the physics. The machine enforces the transitions. The machine records the evidence. Neither the model's capability nor the harness's coordination changes what the machine will allow.

This is the fundamental shift. You're not constraining the agent through prompting ("remember to check the ADRs before implementing"). You're constraining it through structural enforcement that the state machine applies at transition time, before any work proceeds.

In Keel, this looks like:

```bash
# The same turn loop for humans and agents
keel turn                  # orient: inspect charge, health, flow, and doctor
keel next --role operator  # pull: role-scoped work from the delivery lane
keel story start           # begin: transitions the story to in-progress
keel story record          # proof: attach verifiable evidence to the story
keel story submit          # close: gate check; all ACs must have proofs
```

The agent doesn't get a special path. It uses the same commands, hits the same gates, and produces the same evidence as a human operator. The shared vocabulary is the point. When any observer runs keel flow, they see the exact same board state regardless of who executed the last turn. There's no side door: an agent that tries to skip a gate doesn't get a degraded experience; it gets a hard rejection from the same machine a human would hit.
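To make transition-time enforcement concrete, here is a minimal Python sketch of a story state machine whose transition table is the only authority. It is not Keel's implementation: the names `StoryState`, `ALLOWED`, `transition`, and `TransitionRejected` are hypothetical, and only the three story states named in this article are modeled. The `actor` argument is deliberately never consulted when deciding legality, which is the "no side door" property described above.

```python
from enum import Enum


class StoryState(Enum):
    # The three story states this article names; a real board may have more.
    BACKLOG = "backlog"
    IN_PROGRESS = "in-progress"
    NEEDS_HUMAN_VERIFICATION = "needs-human-verification"


# Hypothetical transition table: the only legal moves, identical for every actor.
ALLOWED = {
    StoryState.BACKLOG: {StoryState.IN_PROGRESS},
    StoryState.IN_PROGRESS: {StoryState.NEEDS_HUMAN_VERIFICATION},
    StoryState.NEEDS_HUMAN_VERIFICATION: set(),  # leaving this state is a human decision, not modeled here
}


class TransitionRejected(Exception):
    """Raised when a requested move is not in the transition table."""


def transition(current: StoryState, target: StoryState, actor: str) -> StoryState:
    """Return the new state, or raise. `actor` appears only in the error message."""
    if target not in ALLOWED[current]:
        raise TransitionRejected(
            f"{actor}: {current.value} -> {target.value} is not an allowed transition"
        )
    return target
```

Calling `transition(StoryState.BACKLOG, StoryState.NEEDS_HUMAN_VERIFICATION, "agent")` raises `TransitionRejected`, and so does the same call with `"human"`: skipping in-progress is illegal for everyone.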

ADRs as physics

The clearest demonstration of host-layer governance is the Architecture Decision Record. In Keel, an ADR in proposed state is a blocking constraint. Work in the governed bounded context halts, not because the model was told to check for ADRs, but because the state machine rejects the transition.

```yaml
# .keel/adrs/VDuAohPEw/README.md (frontmatter)
id: VDuAohPEw
index: 2
title: Authentication and Crate Boundary
status: proposed  # ← work in governed context is structurally blocked
context: null
applies-to: []
decided_at: 2026-03-14T20:58:02
```

A proposed ADR covering authentication means no agent can start a story that touches the auth boundary. keel next --role operator won't surface that work. keel doctor will flag architectural drift if the agent tries to proceed. The machine won't allow the transition.

This matters because it changes what the human needs to supervise. You're not reading every pull request hoping the agent respected the architectural decision you mentioned in a system prompt three sessions ago. You're looking at a binary gate: the ADR is accepted or work doesn't happen. The state machine holds the invariant across every session, every agent, every harness.
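A rough sketch of how a proposed ADR can filter work out of the delivery lane, assuming each ADR names the bounded context it governs and each story lists the contexts it touches. Both fields (`governs`, `contexts`) and the helper names are hypothetical; the frontmatter above does not show how Keel actually maps an ADR to a context.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Adr:
    id: str
    status: str   # e.g. "proposed" or "accepted"
    governs: str  # hypothetical: the bounded context this ADR scopes


@dataclass(frozen=True)
class Story:
    id: str
    contexts: frozenset[str]  # hypothetical: bounded contexts the story touches


def blocking_adrs(story: Story, adrs: list[Adr]) -> list[Adr]:
    """Proposed ADRs that govern any context the story touches."""
    return [a for a in adrs if a.status == "proposed" and a.governs in story.contexts]


def startable(stories: list[Story], adrs: list[Adr]) -> list[Story]:
    """What an operator-role pull could surface: stories no proposed ADR blocks."""
    return [s for s in stories if not blocking_adrs(s, adrs)]
```

With a proposed ADR governing `"auth"`, a story touching `"auth"` drops out of `startable` while a `"billing"` story remains; flipping the ADR to `"accepted"` releases both. The gate is binary, exactly as described above.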

Transitions require evidence, not assertions

The most common failure mode in agentic development is the confident wrong answer. The model completes a task, asserts it's done, and moves on. The harness accepts the assertion and records completion. Nobody checks the evidence because there's no structural requirement for evidence to exist.

Keel's Verified Spec Driven Development (VSDD) changes this at the state machine level. A story cannot transition from in-progress to needs-human-verification without all acceptance criteria having recorded verification proofs. The model can't assert completion. It must demonstrate it.

```bash
# Record a proof before submission is allowed
keel story record   # attach evidence: test output, screenshots, cast

# Attempt to submit without proofs: the gate rejects it
keel story submit
# → Error: acceptance criteria AC-01 has no recorded verification proof

# All acceptance criteria must have evidence before the story can be submitted
```

The agent loop adapts to this naturally. Instead of asserting "I implemented the feature," it must run the verification, capture the output, record it against the specific acceptance criterion, and then submit. The harness coordinates this. The state machine enforces it. The human reviewing the submitted story sees a traced chain from implementation to acceptance criteria to recorded proof, not a summary the model wrote.

This is a different kind of trust. You're not trusting the model's judgment about completeness. You're trusting the state machine's check that the evidence exists.
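The evidence gate reduces to a completeness check at submission time. The sketch below illustrates the idea under stated assumptions and is not Keel's code: `Story`, `record`, `submit`, and `GateRejected` are hypothetical names, and evidence is modeled as a list of file paths per acceptance criterion.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class GateRejected(Exception):
    """Raised when submission is attempted without complete evidence."""


@dataclass
class Story:
    acceptance_criteria: list[str]  # e.g. ["AC-01", "AC-02"]
    proofs: dict[str, list[str]] = field(default_factory=dict)  # AC id -> evidence paths
    state: str = "in-progress"

    def record(self, ac: str, evidence: str) -> None:
        """Attach one piece of evidence to a specific acceptance criterion."""
        if ac not in self.acceptance_criteria:
            raise KeyError(f"unknown acceptance criterion {ac}")
        self.proofs.setdefault(ac, []).append(evidence)

    def submit(self) -> None:
        """Move to needs-human-verification only if every AC has at least one proof."""
        missing = [ac for ac in self.acceptance_criteria if not self.proofs.get(ac)]
        if missing:
            raise GateRejected("no recorded verification proof for " + ", ".join(missing))
        self.state = "needs-human-verification"
```

The check only asks whether evidence exists for each criterion; judging whether that evidence is convincing stays with the human reviewer in the management lane.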

Separating human judgment from agent execution

One of the persistent tensions in agentic development is knowing when to involve the human. Involve them too much and you lose the leverage. Involve them too little and you accumulate invisible drift that surfaces only when something breaks in production.

Keel resolves this through a two-queue pull model with lane topology. The management lane holds decisions that require human judgment: proposed ADRs, stories awaiting acceptance, voyages needing planning. The delivery lane holds work agents can execute: backlog stories and in-progress stories. The two lanes don't intersect: a manager role never returns implementation work, and an operator role never returns governance decisions.

```bash
# Human pulls from the management lane; never gets implementation work
keel next --role manager
# → accept: Story VE3IAG4jZ needs your verification

# Agent pulls from the delivery lane; never gets governance decisions
keel next --role operator
# → work: Story VDmdk1uib is ready to start

# The flow dashboard surfaces both lanes simultaneously
keel flow --scene
# MANAGEMENT accept(3) research(1) decompose(0)
# DELIVERY in-progress(1) backlog(4)
```

The model and harness don't decide what's appropriate for human review; the lane topology does, and it's defined in keel.toml by whoever sets up the system. Agents operate at full velocity in the delivery lane. They halt naturally at management-lane gates without needing a prompt to tell them to stop.
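Lane routing can be sketched as a role-to-queue mapping that a pull command consults. The article says Keel keeps this topology in keel.toml, but that file's schema isn't shown, so the `LANES` dictionary and the work-item kinds below are hypothetical stand-ins. Treating lane order as priority order is also an assumption.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical lane topology standing in for keel.toml (real schema not shown).
LANES = {
    "manager": ["story-needs-verification", "adr-proposed", "voyage-needs-planning"],
    "operator": ["story-in-progress", "story-backlog"],
}


@dataclass(frozen=True)
class WorkItem:
    id: str
    kind: str


def next_item(role: str, board: list[WorkItem]) -> WorkItem | None:
    """Return the first item in this role's lane, or None if the lane is empty."""
    for kind in LANES[role]:  # lane order doubles as priority order (an assumption)
        for item in board:
            if item.kind == kind:
                return item
    return None
```

Because the two lists in `LANES` share no kinds, a manager can never be handed implementation work and an operator can never be handed a governance decision, whatever is on the board.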

This is where CLI visual fidelity starts earning its keep. The keel flow --scene dashboard isn't cosmetic. It answers the question any participant in the system, human or agent, needs answered at a glance: where is the board right now, and what requires attention? Management lane queue depths, delivery lane progress, and blocking conditions all surface in a single terminal render. An agent orienting at the start of a session reads this output and has an accurate picture of system state without querying a separate dashboard, opening a browser, or asking the human for a status update.

High-fidelity CLI output matters for agentic workflows in a way it never quite did for purely human ones. A human can squint at abbreviated output, follow a link, or ask a clarifying question. A model parsing terminal output to construct its next action cannot. Every ambiguity in the output is an opportunity for the model to misread board state and proceed on a wrong assumption. Clear visual structure, labeled sections, explicit counts, and unambiguous status indicators reduce that surface area. The CLI becomes the shared language, and its precision directly determines how reliably both humans and agents can act on what it says.
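One way to see why explicit counts matter: if every queue is always rendered, including empty ones, in a fixed `name(count)` grammar, the output can be parsed back without guessing. This hypothetical renderer and parser pair mimics the shape of the keel flow --scene lines shown earlier; it is not Keel's renderer, and the function names are illustrative.

```python
from __future__ import annotations

import re

# One queue cell: a lowercase (possibly hyphenated) name followed by a count in parentheses.
CELL = re.compile(r"([a-z-]+)\((\d+)\)")


def render_lane(lane: str, queues: dict[str, int]) -> str:
    """Render every queue in insertion order, including empty ones, as name(count)."""
    return " ".join([lane] + [f"{name}({count})" for name, count in queues.items()])


def parse_lane(line: str) -> tuple[str, dict[str, int]]:
    """Invert render_lane: lane label first, then each name(count) cell."""
    lane, _, rest = line.partition(" ")
    return lane, {name: int(count) for name, count in CELL.findall(rest)}
```

Printing `decompose(0)` instead of omitting the queue is the design choice that matters: an absent queue and an empty queue look identical to a model unless the renderer distinguishes them.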

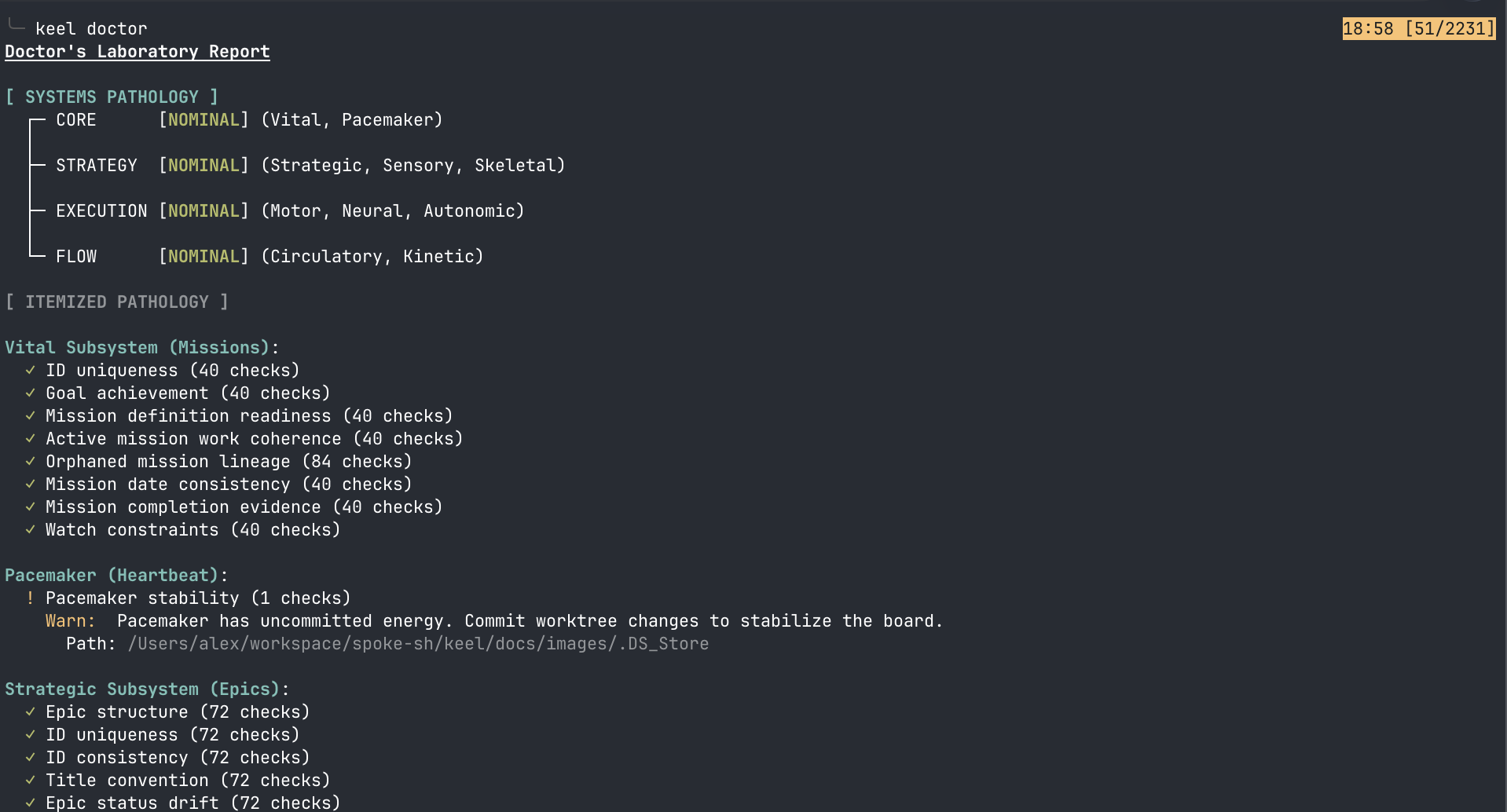

Zero drift as a continuous invariant

Long-running agentic work accumulates structural debt: stories without SRS references, voyages missing design documents, ADRs that governed a context that has since changed, scaffold placeholders that were never filled. In a model-plus-harness system, this drift is invisible until it causes a failure.

In Keel, drift is actively detected and must be resolved before new work proceeds. keel doctor checks structural coherence across the entire board, and agents are instructed to run it at the start of every session and to fix what it reports before doing anything else.

```bash
keel doctor
# NEURAL: ✓ All story IDs consistent, all ACs complete
# MOTOR: ✗ Voyage 1vuz8jNo3 missing SDD.md content
# STRATEGIC: ✓ All epics have authored PRDs
# SKELETAL: ✗ ADR VDmdmt3q7 is proposed — work in context blocked
# PACEMAKER: ⚠ Worktree has uncommitted energy (2 files modified)
#
# 2 errors, 1 warning — resolve before proceeding
```

The drift categories are structural, not heuristic. Scaffold drift is the literal presence of unfilled placeholder text. Architectural drift is working in a bounded context with a proposed ADR. Requirement drift is a story missing SRS references. These aren't warnings to weigh; they're blocking conditions the state machine treats as hard failures.
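The three drift categories above are mechanical checks, which is what makes them usable as hard failures. A minimal sketch, assuming a hypothetical `{{...}}` placeholder syntax for scaffold text and in-memory story records (`StoryDoc`, also hypothetical) rather than Keel's real file format:

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

# Hypothetical placeholder syntax; Keel's actual scaffold markers are not shown in the article.
PLACEHOLDER = re.compile(r"\{\{[^}]*\}\}")


@dataclass
class StoryDoc:
    id: str
    body: str
    srs_refs: list[str] = field(default_factory=list)
    contexts: set[str] = field(default_factory=set)
    state: str = "backlog"


def doctor(stories: list[StoryDoc], proposed_adr_contexts: set[str]) -> list[str]:
    """Return hard-failure findings; an empty list means the board is clean."""
    errors = []
    for s in stories:
        if PLACEHOLDER.search(s.body):
            errors.append(f"scaffold drift: {s.id} contains unfilled placeholder text")
        if not s.srs_refs:
            errors.append(f"requirement drift: {s.id} has no SRS reference")
        if s.state == "in-progress" and s.contexts & proposed_adr_contexts:
            errors.append(f"architectural drift: {s.id} is in progress in a context with a proposed ADR")
    return errors
```

Each check is a yes/no question about file contents, so two runs over the same board always agree, which is the property that lets a session refuse to start until the list is empty.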

This changes the maintenance dynamic for long-running agentic work. Drift doesn't accumulate silently. It surfaces at the next doctor check, requires resolution before new work starts, and gets fixed by the same agents that caused it. An agent that introduces scaffold drift on Monday cleans it up on Tuesday before starting anything new. Entropy is structurally bounded.

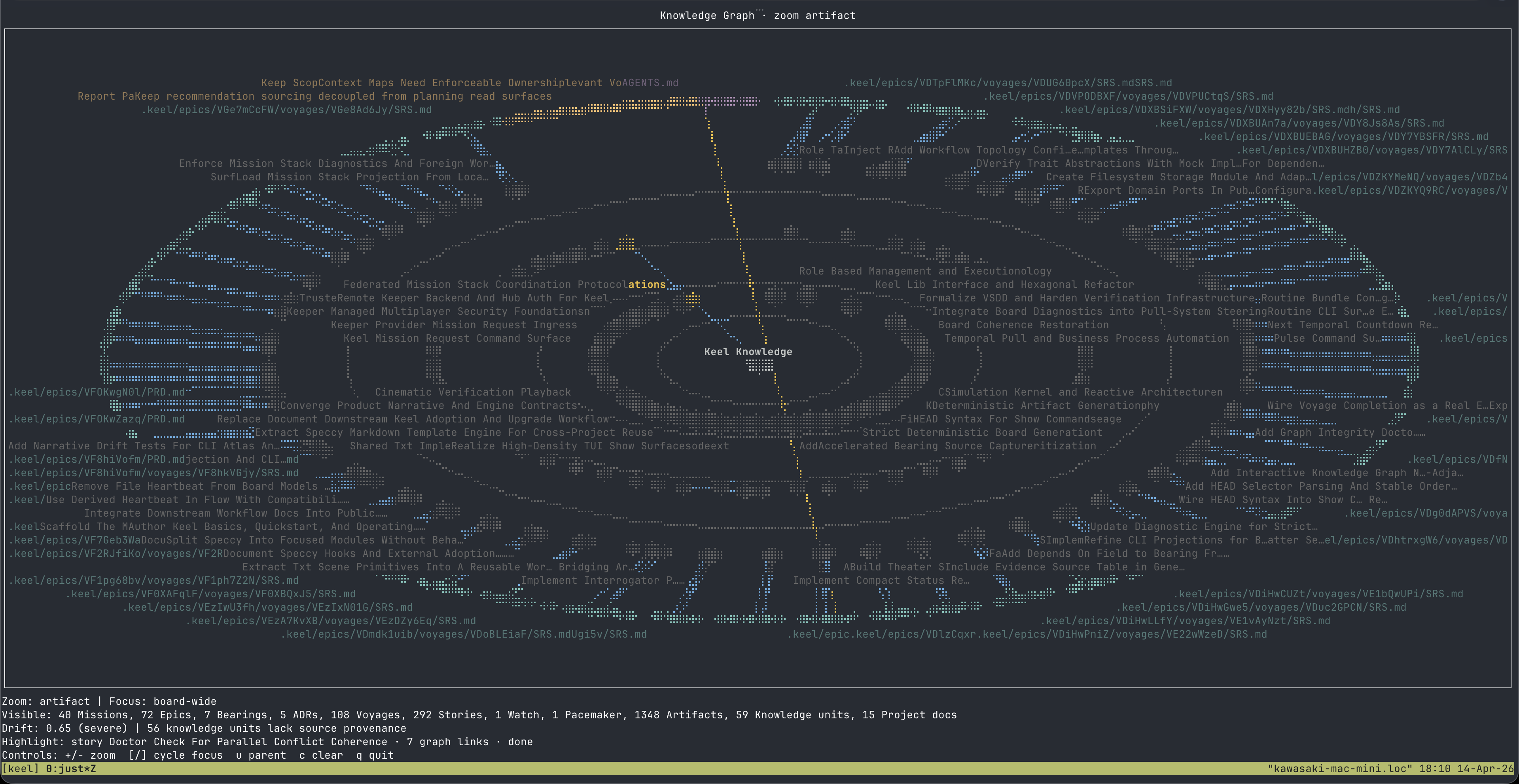

Files as truth, git as the audit log

A subtle but important consequence of the host layer design: all board state lives in markdown files with YAML frontmatter. There's no hidden database, no daemon that can fall out of sync, no service to query. git log is the complete audit trail of every board mutation.

When an agent submits a story, the evidence files and lifecycle transitions are committed to the repository as part of the sealing commit. The git history is the immutable record of what the agent claimed and what evidence it recorded. No retroactive amendment. No separate audit service to maintain.

It also means board state is inspectable by any tool that can read a filesystem. There's no API to integrate with. A human can open .keel/stories/VE3IAG4jZ/README.md and see exactly where that story stands, what evidence was recorded, and which acceptance criteria are satisfied.
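Because board state is plain markdown with YAML frontmatter, a few lines of standard-library Python are enough to read it. This sketch assumes the conventional `---` fences around the frontmatter and the `.keel/stories/<id>/README.md` layout mentioned above; `read_frontmatter` and `board_state` are hypothetical helpers that parse only flat `key: value` pairs.

```python
from __future__ import annotations

from pathlib import Path


def read_frontmatter(text: str) -> dict[str, str]:
    """Parse flat `key: value` lines between leading '---' fences (no nesting or lists)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")  # split on the first colon only
        if sep:
            meta[key.strip()] = value.split(" #", 1)[0].strip()  # drop trailing comments
    return meta


def board_state(root: Path) -> dict[str, dict[str, str]]:
    """Map each story directory name under root/stories to its README frontmatter."""
    return {
        readme.parent.name: read_frontmatter(readme.read_text(encoding="utf-8"))
        for readme in sorted(root.glob("stories/*/README.md"))
    }
```

A production reader would hand the fenced block to a real YAML library such as PyYAML's `yaml.safe_load` instead of splitting on colons; the point here is only that no API or daemon sits between a tool and the board.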

For an agentic system, this matters: the model's context window is temporary, but the board state is permanent. Every session starts from verified truth, not reconstructed inference.

The knowledge graph surfaces this artifact web visually. Each node is a file. Each edge is a structural relationship: a story linked to its epic, an epic to its voyage, an ADR scoping a bounded context, an SRS reference anchoring an acceptance criterion. The graph is generated directly from the filesystem: no separate indexing step, no sync lag. What you see is the state of the board at the moment the command runs.

This kind of visualization isn't decorative. For a model navigating the board, the knowledge graph is a legibility primitive. Instead of assembling context by reading dozens of files in sequence, the agent can orient from a single structured view: which artifacts exist, how they relate, and where the graph has gaps. A missing edge is missing evidence. A disconnected node is an orphaned artifact.
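The orphan and gap checks described above fall out of a simple graph built from frontmatter references. In this sketch each artifact is a dictionary of hypothetical reference fields (for example `epic` or `voyage`) pointing at other artifact ids, and the function names are illustrative. A node with no resolved edges is an orphan, and a reference whose target doesn't exist is a missing edge.

```python
from __future__ import annotations

from collections import defaultdict


def edges(artifacts: dict[str, dict[str, str]]) -> set[tuple[str, str, str]]:
    """(source, relation, target) for every reference that resolves to a known artifact."""
    return {
        (src, rel, dst)
        for src, refs in artifacts.items()
        for rel, dst in refs.items()
        if dst in artifacts
    }


def dangling(artifacts: dict[str, dict[str, str]]) -> list[tuple[str, str, str]]:
    """References whose target does not exist: missing edges, i.e. missing evidence."""
    return sorted(
        (src, rel, dst)
        for src, refs in artifacts.items()
        for rel, dst in refs.items()
        if dst not in artifacts
    )


def orphans(artifacts: dict[str, dict[str, str]]) -> list[str]:
    """Artifacts with no resolved edge in either direction: disconnected nodes."""
    degree: dict[str, int] = defaultdict(int)
    for src, _, dst in edges(artifacts):
        degree[src] += 1
        degree[dst] += 1
    return sorted(a for a in artifacts if degree[a] == 0)
```

Because the graph is recomputed from the artifacts each time, there is no index to fall out of sync; the cost is a full scan per query, which is cheap at the scale of a project board.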

High-fidelity CLI output is not a UI nicety for agents; it's how the model reads board state without hallucinating the parts it can't see.

This is the deeper argument for investing in CLI visual fidelity in agentic tooling. A human operator adapts to ambiguous output. A model does not: it fills gaps with inference, and inference compounds into drift. When the CLI renders board state with precision (labeled queues, explicit relationship edges, unambiguous status indicators), the model's read of the system matches reality. When it doesn't, the gap between what the model thinks is true and what's actually true becomes the primary failure mode. Visual fidelity in the interface is structural reliability in the agent loop.

What this changes about trust

You verify everything important in agentic systems because you can't be sure the model held to the intent you had when you started the session. That's the actual cost of contextual trust: the human becomes the consistency check, session after session, because nothing else is holding the invariants.

The host layer makes trust structural. You trust the state machine because it enforced the gate checks. You trust the evidence because the transition required it. You trust the scope because the ADRs blocked everything outside it. You trust the audit trail because git holds it immutably.

The model's capability matters; a better model executes delivery-lane work more effectively. The harness's coordination matters; a well-designed harness surfaces the right context at the right time. But neither determines whether the work was governed correctly. The host does that, independently of both.

The leash isn't a limit on what the model can do. It's a guarantee that what the model does was supposed to happen.

The physics are real

The design pattern that emerges from Keel is to place a CLI state machine between every actor and the work. Humans and agents are first-class participants in a governed system. The host defines the physics. The model executes within them. The harness coordinates around them. But neither the model nor the harness makes the rules; the CLI state machine does, persistently, across every session.

This is not a new idea in software engineering. Formal state machines, enforced transitions, and evidence-gated acceptance are standard in high-assurance systems. What's new is applying that discipline to the agentic layer: building the host so that a language model operating at full autonomy is still operating within a structure a human designed and a machine enforces.

The shared way of working doesn't emerge from the model being aligned. It emerges from the physics being real.